The Hidden ROI Killer in AI Projects: Starting With the Solution

Most AI investment failures are decided in the first two weeks. This is how you ensure those two weeks are used wisely.

Founder & Senior AI Strategy Consultant, Prosperaize

.jpg)

Most AI investment failures are decided in the first two weeks. This is how you ensure those two weeks are used wisely.

Founder & Senior AI Strategy Consultant, Prosperaize

.jpg)

An e-commerce organization came to me with a clear request: build a cart abandonment prediction model. The idea was to identify baskets at risk of abandonment early enough to trigger an automatic discount. The scope was defined, the use case was concrete, and the technical approach was already mapped out. When I asked what metric they were trying to move and why customers were abandoning carts in the first place, the conversation stopped. Nobody had asked that question. What we found, after a short analysis, was that customers with delivery windows of five or more working days were abandoning at significantly higher rates than those with faster delivery. No prediction model would have changed that. They didn't need AI. They needed a better delivery program.

The model would have been built. The discounts would have shipped. And the abandonment rate would have stayed roughly where it was - because the intervention was targeting the wrong cause.

The most common situation we find when an organization is considering an AI initiative: they've already decided what they're building, and more often than not, they've also decided on the technical approach.

Not what problem they're solving. Not what metric they want to move. What they're building.

It might be a chatbot. A recommendation engine. A document processing pipeline. A "co-pilot" for some internal workflow. The technology is chosen. The feature is already in the backlog. The sprint is already planned. Someone's been prototyping it in a side branch.

The business case is being assembled around the decision, not before it.

This is the pattern. And it is the single most reliable predictor of whether an AI initiative will fail.

In 2025, more than 40% of companies abandoned most of their AI initiatives (S&P Global). MIT's research on enterprise generative AI found that 95% of projects failed to deliver meaningful business impact. Gartner projects that 30% of AI POCs will be abandoned before reaching production, and over 40% of agentic AI projects will be canceled before the end of 2027.

The dominant narrative blames technology complexity, data quality, or organizational resistance. All of these play a role. But the underlying cause is simpler and more preventable: the sequence is wrong.

Most organizations start with a solution. They should start with a metric.

Before going further: if your organization is in pure exploration mode - genuinely curious about AI but without a specific business problem to solve or a P&L pressure to address - this process will feel premature. That's fine. This article isn't for you yet.

This is for organizations that already have a business goal in mind, that are considering an AI investment to advance it, and that want a structured way to decide where and how to invest before committing a real budget.

If that's you, read on.

Every business function exists to move something measurable. Revenue, cost, time, quality, risk - these are the dimensions business operates in. Every initiative an organization undertakes should connect to a specific goal, expressed in one of these dimensions.

AI is not an exception. It is not a category on its own, calling for the normal business logic to be suspended. AI is an investment, and like every investment, it needs to be tied to a specific, measurable outcome.

Ron Kohavi - who spent years running controlled experimentation programs at Microsoft and Amazon, and whose book Trustworthy Online Controlled Experiments is the definitive text on metrics-driven decision making - calls this the Overall Evaluation Criterion: a single agreed-upon metric that defines what "better" means before any initiative begins.

Eliyahu Goldratt put the underlying principle more bluntly: "Tell me how you measure me, and I will tell you how I will behave."

Neither was talking about AI. Both were exactly right about it.

The goal of this step is not to find a metric that justifies the solution you've already chosen. It is to define what needs to be changed before devising the best way to do it.

Good metrics for AI investment decisions share four characteristics:

1. They are specific and measurable. "Improve operational efficiency" is not a metric. "Reduce average customer support resolution time from 11 minutes to under 4 minutes" is.

2. They connect directly to a business outcome. Revenue generated, cost reduced, time compressed, error rate decreased, churn prevented. Not "better customer experience." What does better mean, in a number?

3. They are attributable. You need to be able to tell whether a change in this metric was caused by your AI initiative or by something else. This matters more than most teams expect.

4. They are movable by the intervention you're considering. Some metrics are real and important but are too far downstream to be moved by a single AI initiative. Know the causal chain before you commit.

The cart abandonment example that opens this post is a failure of characteristic four. The metric was real. The initiative was coherent. The intervention couldn't move it - because nobody had traced the causal chain before committing the budget.

With a clear goal and a measurable metric, the next question is: how do we get there? What kind of AI do we build? Where in the business does it land?

Most organizations skip this question. They go directly from "we want to improve X" to "we'll build a [specific solution] by leveraging this [specific AI technology] to improve X."

The problem is not the team. It's the motion.

Product companies are trained to ship features. That is a known planning motion - identify user needs, scope a solution, add it to the roadmap, allocate engineering, ship, measure. It is a workflow optimized for building things that solve problems you've already validated.

AI features are not a roadmap decision. They are a business design decision. The question is not "how do we build this?" The question is "what metric do we want to move, how would we know we've moved it, and what category of solution could plausibly do it?"

Reverse-engineering from a business metric to a feature category is not a muscle most product teams have developed. So they substitute the motion they have - scope and ship - and start with the solution. The metric gets defined to fit the feature, not the other way around.

The data support both readings. S&P Global reported that 42% of companies abandoned most of their AI initiatives in 2025 - up from 17% the year prior. This spike is not explained by AI technology getting harder. It's explained by organizations scaling up initiative volume without scaling up the rigor of how initiatives are selected and scoped.

Here is the structural tension at the center of every AI initiative, and it is almost never named explicitly in the early conversations.

You are trying to move a deterministic business metric with a non-deterministic instrument.

A product owner commits to shipping an AI feature in eight weeks. The sprint is planned. The engineer has a working AI solution. Week eight arrives - and the solution is running. It's also producing outputs that are sometimes wrong, sometimes inconsistent across runs, and occasionally surprising in ways nobody anticipated.

The PO assumed "working" meant the same thing it means for software. It doesn't.

When you write a feature that calculates a discount, the calculation is deterministic. Same inputs, same output, every time, indefinitely. When you build an AI feature that does something analogous - recommends a discount, suggests a response, generates a summary - the output is probabilistic. The model will be right most of the time. Not all of the time. And not in the same way every time.

This is not a bug. It is a fundamental property of how AI systems work. But it has direct consequences for how you plan the feature, what you promise at the sprint review, and what "done" actually means.

In The Real Reason 90% of AI Projects Fail, I described this as the Language of Uncertainty - one of five languages AI demands that software projects never had to speak. The most important sentence in it: "You cannot plan accuracy improvements the way you plan feature sprints." Most product teams learn this the hard way.

I’ve seen this many times than I should have: an AI engineer three months into running the same experiment with different threshold settings, trying to reach an accuracy number that was chosen by someone who has never trained a model. The PM committed 90% to the executive board. The engineer is at 81% and out of ideas that haven't already been tried. Nobody lied. Nobody was incompetent. The sequence just created an impossible situation - a fixed target, set before anyone understood what was achievable, inherited by the person least able to renegotiate it. Missed deadlines. An unhappy board. Friction between the PM who made the promise and the AI engineers who couldn't keep it. These are the costs of a conversation that never happened in the planning sessions. The internal version of this failure is a sprint review. The client-facing version is a renewal conversation.

The same failure plays out externally with higher stakes. I built a document processing pipeline for a B2B platform: reports based on 50-page documents, structured extraction, and reliable factual output. The system was performing well. An executive ran the pipeline on the same document twice, noticed the phrasing differed between runs, and called the auditor at midnight. By morning, the system was under review. We spent the next week in remediation: walking through the architecture, explaining that the variance was in language, not in facts - that the structured output layer was stable, but language models don't reproduce phrasing identically by design. The system had performed exactly as built. But I had never told anyone what to expect. In a compliance context, unexpected behavior and wrong behavior are the same thing. A midnight call to an auditor is an expensive way to learn to set expectations up front. Named upfront, non-determinism is a design constraint. Discovered in the sprint review, it's a rollback.

With a business goal and a metric agreed upon, the next question is: which part of the product - which user flow, revenue stream, or operational process - benefits most from AI investment first?

Not every product area moves the same metric equally. Not every area has usable data. Skipping this step means you're allocating engineering time based on who lobbied hardest in the last roadmap session, not where the impact potential is highest.

Before scoring anything, identify which of the following gain types the AI investment would primarily deliver. Each has a different owner, a different time horizon, and a different connection to your target metric.

If you're the CEO running this exercise, two conversations need to happen before you score anything. Your CPO should tell you which product areas are strategically prioritized in the current planning horizon - their input determines how you weigh the "streamlined product" and "core service" dimensions against the others. Your CFO should tell you which gain types have a direct path to P&L now - their input prevents you from scoring "new business opportunity" highly in a quarter where the board metric is cost reduction, not growth. Run this exercise without those two inputs, and the matrix reflects room energy, not business logic.

At Prosperaize, we run this as a scored working session with the founding or leadership team. Each product area gets assessed on three dimensions:

The combination produces a prioritized shortlist - grounded in business impact and implementation feasibility, not in product roadmap politics.

What makes the session valuable is not the scores. It's the disagreements the scores surface. When the CPO scores a product area's data availability as 4 and the ML engineer scores it as 2, that gap is the insight. The CPO sees user events flowing through the analytics platform. The ML engineer sees that none of those events are labeled for the task at hand. Both are right about something different - and that gap, surfaced here, costs nothing. Discovered in a sprint, it costs a quarter.

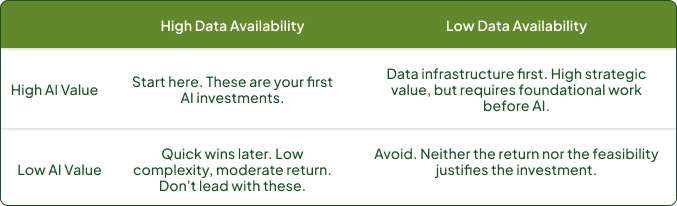

The resulting quadrants:

One pattern I've seen consistently: engineers overestimate the usability of their event data ("we track everything"), and product leaders overestimate how quickly raw behavioral data translates into a trainable signal. Surfacing that gap in this session costs an afternoon. Discovering it in week six of a sprint costs a quarter.

You've identified the product area and the type of gain it delivers. What remains are the two decisions that determine whether your AI initiative survives contact with production.

The first: translating your gain type into a set of candidate AI patterns - concrete approaches that experienced teams have validated for this category of problem. This is where the exercise stops being purely strategic and becomes technical and product-based. It requires a structured working session with product, design, engineering, AI, and delivery in the room.

The second: deciding which of those candidates to build first. Not every high-impact use case is worth pursuing first, and not every low-complexity use case is worth pursuing at all. The instinct is to pursue everything that looks promising. The result is predictable - too many AI use cases are scoped in parallel, complexity is underestimated across all of them, and a portfolio of incomplete prototypes that never reach production.

Good news for you! We've built the frameworks that can guide you towards making both decisions - a gain-type-to-pattern mapping and a gains vs. risk/complexity scoring matrix - and made them available as a downloadable toolkit.

The quality of what comes out of this process is directly proportional to the breadth of AI experience in the room.

Organizations that have attempted AI before - even if those attempts failed - generate significantly better proposals than organizations starting from scratch. They've seen what doesn't survive the POC stage. They've seen what breaks in production that looked fine in the prototype. They know which categories of use cases consistently underestimate integration complexity. That pattern recognition changes what they propose.

The constraint for organizations starting fresh is not ambition. It's the inability to know what's possible.

You cannot propose what you cannot imagine. When a team has never seen a recommendation model degrade silently in production because the training data distribution shifted, they don't scope for monitoring - and they find out six months later when conversion drops. When a team has never seen a fine-tuned classifier fail on edge cases, it was never shown, they don't build an escalation path. When a team has never seen an AI agent execute a complex multi-step workflow across multiple tools, they don't include it in the opportunity map - not because it's infeasible, but because it's outside their frame of reference.

The trap isn't always inexperience. Sometimes it's depth without breadth - and that produces a different failure mode.

I worked with an AI engineer with five years of experience, concentrated in two narrow domains: computer vision and graph neural networks. When a public transport client came with a task of recommending new routes, she proposed adapting graph neural networks to model the route structure and act as recommender systems for new routes. Five points for creativity - routes are graph structures, and the logic wasn't wrong. Zero points for risk awareness. GNNs for this use case would have been expensive and extremely risky to try to build, difficult to explain to a transit authority, and disconnected from the business question the client was actually asking: not "which routes are topologically interesting?" but "which routes generate enough ROI to justify the cost of running them given driver availability?"

In our internal alignment session, we reframed the problem. We built ML models that predicted route ROI against operating cost and driver availability - something the client could act on directly. On top of those, an LLM layer drafted weekly and monthly performance reports from the model outputs, removing a manual reporting task the operations team was spending significant time on each cycle.

The engineer had the experience. What she lacked was the exposure to enough different types of problems to recognize when her strongest tool wasn't the right one for the business question. That gap in breadth is exactly what external pattern recognition is designed to cover.

This is the primary reason external partners change the quality of this process. Prosperaize's value at this stage is not facilitation - it's bringing pattern recognition from multiple initiatives, industries, and failure modes into the scoping conversation. The proposals that emerge are shaped by what has been validated to work across those situations, not just by what sounds technically plausible.

The breadth of AI experience a team has access to when formulating a strategy is a leading indicator of whether that strategy will survive contact with implementation.

What the above-referenced process ultimately enforces is a shift from building what sounds valuable to validating what actually is. It replaces momentum with intent.

Finally, ask yourself if you are addressing the hard questions sitting just beneath the surface. Where in your current thinking are you assuming clarity that hasn’t been tested? Which parts of the problem are understood through data, and which through intuition or prior experience? Are you committing to a target before understanding what is realistically achievable - and who carries that risk when reality doesn’t match the plan?

At this stage, discipline matters more than speed. Because once execution begins, the system will simply expose the quality of the decisions made upfront. And by then, the cost of learning what you should have asked earlier is no longer only theoretical.

If you want an external perspective on this process - someone who has run it across multiple industries and failure modes - that's what Prosperaize's Prosperity Audit is. [link]"

Dušan Stamenković is the founder of Prosperaize, an AI Asset Management Consultancy. He advises organizations on whether, where, and how to invest in AI - reducing risk and maximizing return across the AI investment lifecycle.